Is ChatGPT Sharing Your Data With the Military? The Story Behind 2.5 Million People Quitting OpenAI

TL;DR

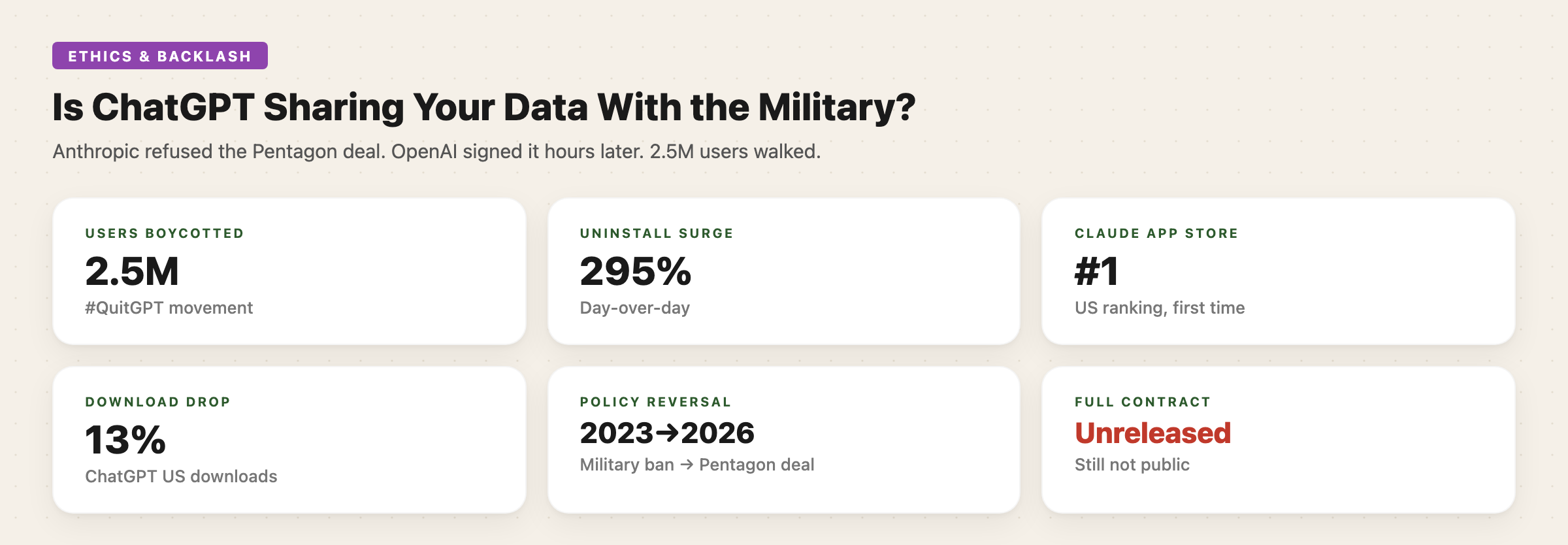

OpenAI signed a deal to deploy AI on Pentagon classified networks — hours after Anthropic refused the same deal and got blacklisted by the Trump administration. The #QuitGPT movement exploded: 2.5 million users boycotted, ChatGPT uninstalls surged 295%, and Claude hit #1 on the US App Store. Altman backtracked within days, adding surveillance restrictions. But the full contract hasn’t been released, and critics say the safeguards have loopholes you could drive a tank through.

What Happened

The story starts with Anthropic. In late February 2026, the Pentagon wanted AI companies to agree to “any lawful use” of their technology on classified military networks. Anthropic CEO Dario Amodei refused, writing that the company “cannot in good conscience accede” to the request — specifically citing two red lines: mass domestic surveillance of Americans and fully autonomous weapons.

The response was swift and unprecedented. President Trump ordered all federal agencies to stop using Anthropic technology within six months. Secretary of Defense Pete Hegseth designated Anthropic a “supply-chain risk” — a label normally reserved for foreign adversaries, never before applied to an American company.

Hours later, on February 27, Sam Altman announced OpenAI had signed the deal the Pentagon wanted. He posted on X that the Department of Defense had shown “deep respect for safety.” The contract allowed the Pentagon to use OpenAI’s models for “all lawful purposes.”

The backlash was immediate. The #QuitGPT movement went viral across X, Reddit, and Bluesky. ChatGPT uninstalls surged 295% overnight. US downloads dropped 13% day-over-day. Claude shot to #1 on the US App Store for the first time. OpenAI’s robotics lead Caitlin Kalinowski resigned over the deal.

Within days, Altman admitted the deal “appeared opportunistic and haphazard” and published amendments: explicit bans on domestic surveillance of US persons, restrictions on using commercially acquired personal data, and exclusion of intelligence agencies like the NSA from the current agreement.

The Numbers

| Metric | Value |
|---|---|
| Users who boycotted/cancelled | ~2.5 million |
| ChatGPT uninstall surge | 295% day-over-day |
| US download drop | 13% day-over-day |
| Claude App Store ranking | #1 (US, first time ever) |
| Estimated contract value | $500M–$2B over 5 years |
| Anthropic phase-out window | 6 months |
| OpenAI’s original military ban | Removed January 2024 |
| Time between Anthropic refusal and OpenAI deal | Hours |

What the Contract Actually Says

Prohibited (after amendments):

- Domestic surveillance of US citizens or residents

- Tracking through commercially acquired personal data

- Use by NSA or other intelligence agencies (requires separate deal)

- Independently directing autonomous weapons where law requires human control

Allowed:

- “All lawful purposes” on classified networks

- Military operations with human decision-makers in the loop

- Intelligence activities complying with the Patriot Act, FISA, and Executive Order 12333

Enforcement:

- Cloud-only deployment architecture

- Cleared OpenAI engineers embedded with the military

- Cleared safety researchers “in the loop”

- OpenAI says it can use classifiers to independently verify that red lines aren’t crossed

What Critics Say

The core criticism is that the safeguards sound better than they are. A former DOJ national security attorney told The Intercept: “There is nothing OpenAI can do to clarify this except release the contract.” The full contract has not been made public.

The EFF argues that existing laws like the Patriot Act already allow certain surveillance of Americans under provisions targeting foreign citizens. The contract prohibits “intentional” domestic surveillance — but critics note that incidental collection of American data during foreign intelligence operations is already standard practice.

The “any lawful use” language is the crux of the debate. Laws can change, and the DOD retains authority to modify its own policies. OpenAI says having restrictions codified in the contract protects against future changes — but legal scholars point out that contractual terms between a private company and the federal government have limited enforceability.

One former Pentagon official told The Intercept anonymously: “If you trust the cabal of Sam Altman, Donald Trump, and Pete Hegseth, there’s nothing I can do for you.”

The Bigger Picture

This wasn’t just a PR crisis — it reshaped the competitive landscape. Anthropic got blacklisted but won massive public trust. OpenAI got the contract but took a reputational hit that’s still reverberating. The QuitGPT movement demonstrated that AI users are paying attention to ethics in ways that directly impact revenue.

OpenAI’s position on military use has reversed completely. In 2023, its policy explicitly banned use “for military, weapons, or warfare purposes.” That ban was quietly removed in January 2024. By February 2026, the company was deploying on classified Pentagon networks.

Whether the safeguards hold depends on factors no one outside classified networks can verify. The public is being asked to trust written assurances from a company that has reversed its own principles, overseen by a government that blacklisted the company that said no.

Follow Synvoya for daily AI news summaries — quick reads, no fluff.