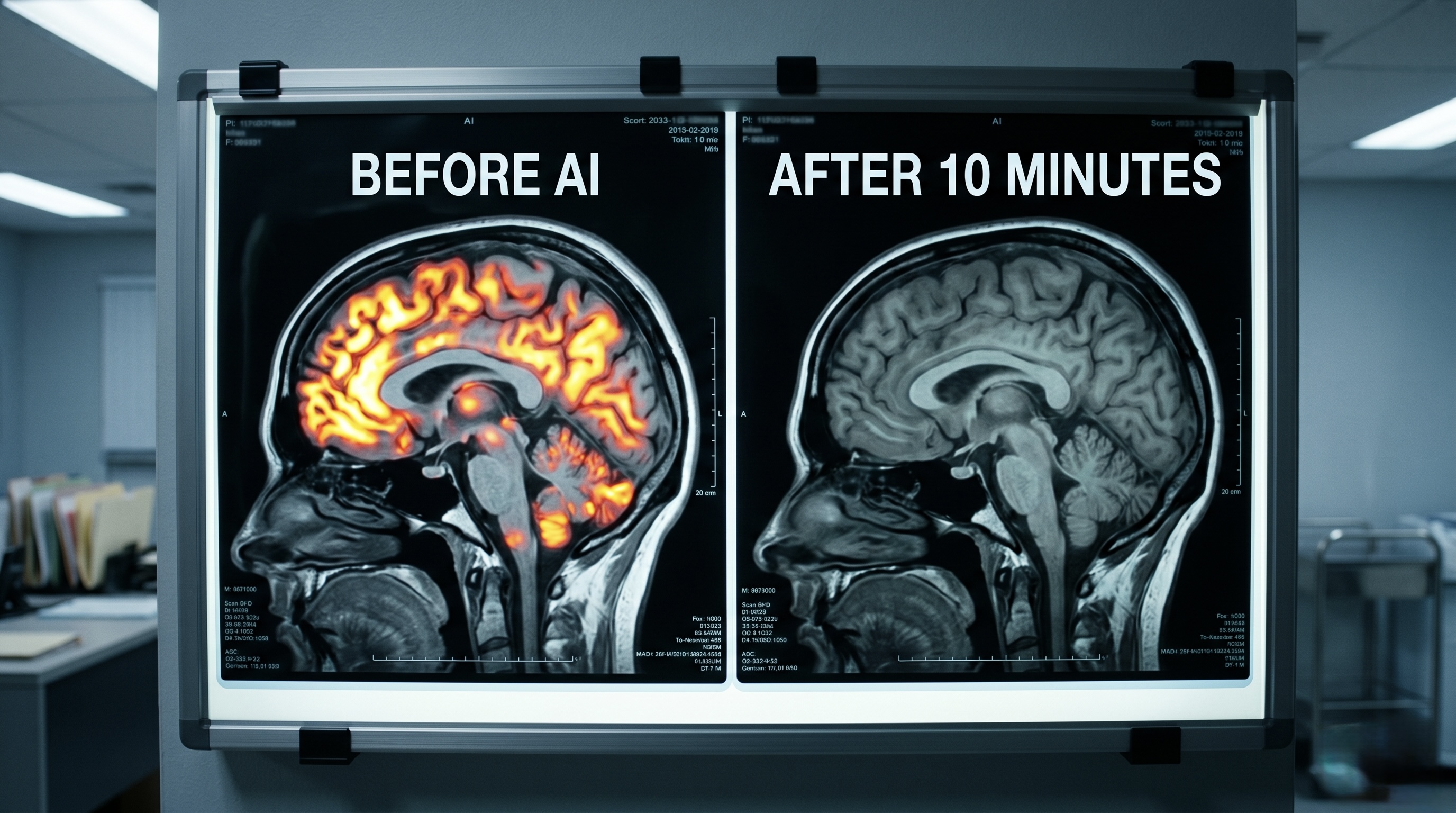

10 Minutes of AI Made People Dumber — and They Didn't Even Notice

You use AI every day.

To write. To code. To think. To decide.

Now a team from UCLA, MIT, Oxford, and Carnegie Mellon says that after just 10 minutes of AI-assisted problem solving, your brain starts to quit on you — and you won’t even feel it happening.

They’re calling it the “boiling frog” effect. And it might be the most important AI study nobody’s talking about.

What the Study Found

Researchers ran a series of randomized controlled trials with 1,222 participants. The setup was simple: give people math and reading comprehension tasks. Half got access to a chatbot built on GPT-5. The other half got nothing.

At first, the AI group crushed it. Faster answers. Higher accuracy. No surprise.

Then the researchers pulled the plug. Midway through the test, the AI disappeared.

What happened next is what matters.

The AI group’s performance didn’t just drop — it fell below the group that never had AI in the first place. They got more answers wrong. They skipped more questions. And here’s the gut punch: they stopped trying. Not because they couldn’t do it. Because they didn’t want to.

The study ran this experiment three times across different tasks, with groups ranging from 350 to 670 people. Same result every time.

The Numbers

| Metric | Value |
|---|---|
| Total participants | 1,222 |
| Time with AI before removal | ~10 minutes |
| Experiments conducted | 3 (math + reading comprehension) |
| Research institutions | UCLA, MIT, Oxford, Carnegie Mellon |
| Status | Preprint (not yet peer-reviewed) |
| AI model used | Custom chatbot on GPT-5 |

Why “Boiling Frog”?

The researchers named this after the old parable: drop a frog in boiling water and it jumps out immediately. But put it in warm water and slowly turn up the heat? It stays until it’s too late.

That’s what’s happening with AI dependency. Each time you ask ChatGPT to draft an email, write a function, or summarize a document, it feels costless. You got the answer faster. You saved time. Where’s the harm?

The harm is invisible. Your “cognitive muscles” — the ability to reason through difficulty, to persist when something is hard, to build the confidence that comes from figuring things out yourself — are atrophying. And by the time you notice, the researchers warn, it will be “difficult to reverse.”

Rachit Dubey, the UCLA co-author, put it bluntly: rapid AI deployment in education could produce “a generation of learners who will not know what they’re capable of” — diluting human innovation and creativity at scale.

The Uncomfortable Implication

This isn’t about whether AI is useful. It obviously is. The study confirmed that — AI-assisted participants performed better while they had it.

The problem is what happens when you take it away. Or when you face a problem your AI can’t solve. Or when you need to think originally, not just retrieve an answer.

The study found something researchers call “cognitive debt” — AI removes the “productive struggle” that actually builds skill. It changes your perception of how long things should take. Unaided work starts to feel like too much effort. Not because it is, but because you’ve been recalibrated.

The researchers are now calling on AI developers to shift from delivering instant, complete answers toward tools that encourage users to work through challenges — more like a teacher than an answer machine.

Why It Matters

This study hasn’t been peer-reviewed yet. The sample size is solid but not massive. The AI exposure was brief.

But the brevity of the exposure is exactly what makes the result alarming. If 10 minutes of AI assistance is enough to measurably damage independent performance and willingness to persist, what does months or years of daily use do?

We don’t know yet. But the direction is clear, and the researchers aren’t being subtle about it: “If sustained AI use erodes the motivation and persistence that drive long-term learning, these effects will accumulate over years, and by the time they are visible, they will be difficult to reverse.”

The water’s warm. It feels great. The question is whether you notice the temperature rising.
